Background

Surgical navigation has become the standard of care in several surgical areas, such as neurosurgical, orthopedic and spinal procedures. Although such navigation enables the use of pre-operative imaging and planning during the procedure, the hand-eye coordination it demands and the need to switch focus back and forth between the operative field and the navigation screen are still considered drawbacks. The recent introduction of Augmented Reality (AR) devices permits more immersive navigation approaches, in which the pre-operative information and planning are visualized directly in the surgeon's field of view. Such approaches have great potential to improve surgical procedures and may both enable better training and deliver safer surgery.

Main objectives

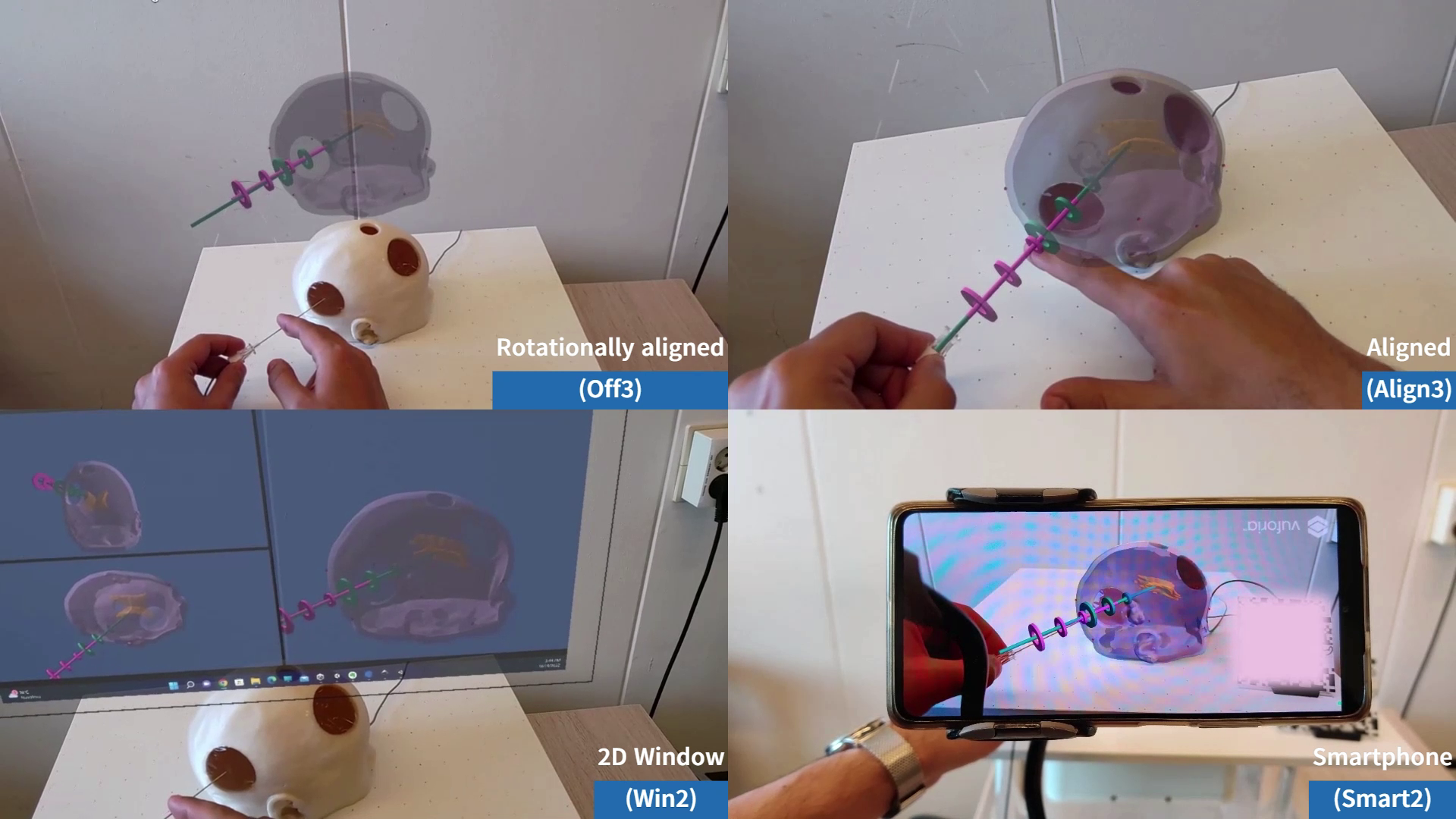

1- Develop and assess vision-based AR navigation systems, in which an AR headset such as Microsoft HoloLens is used for both tracking and visualization. The project focuses on developing and assessing the key AR-based components required for navigation, such as patient-to-model alignment, tracking of the patient and the surgical instruments, and visualization of the virtual model in the surgical scene.

2- Bring AR solutions to the operating room to support surgical planning and navigation.